Fraud in payments has always adapted to new technology, but in 2026 the pace of change has become difficult to ignore. Fraudsters now use artificial intelligence to generate identities, scale attacks, and imitate genuine customer behaviour, while payment providers rely on their own AI models to protect every transaction in real time.

This shift has turned fraud prevention into an AI-versus-AI contest. On one side, criminals use generative tools, bot networks and deepfakes to bypass traditional controls. On the other, merchants and payment service providers deploy advanced fraud engines that continuously analyse behaviour and risk signals across entire customer journeys.

For high-risk verticals in particular, this evolution raises important questions. How are fraud patterns changing? Which types of attacks are becoming more common? What does an effective, AI-enabled defence actually look like? This article explores the 2026 fraud landscape, explains how modern fraud engines operate, and outlines practical areas of focus for payment and risk teams.

- The 2026 Fraud Landscape – From Manual Attacks to AI-Driven Campaigns

- Bot Attacks, Deepfakes and Synthetic Identities – What They Look Like in Practice

- How Modern AI Fraud Engines Actually Work

- Defensive Layers Against AI-Powered Fraud

- Practical Considerations for Merchants and Payment Teams in 2026

- Data and Metrics That Support Better Outcomes

- Looking Ahead – AI, Regulation and Payment Risk

- Conclusion

- FAQ

The 2026 Fraud Landscape – From Manual Attacks to AI-Driven Campaigns

How Fraud Has Shifted in Recent Years

Historically, many payment fraud cases involved relatively simple tactics: stolen card data, password reuse, and straightforward social engineering. While those threats still exist, several recent trends show that fraud is being industrialised, with automation and AI supporting much larger and more persistent campaigns.

Analyses of digital fraud in 2026 highlight a sustained rise in identity-related threats, especially synthetic identities that mix genuine and fabricated data. At the same time, improvements in real-time payments and online onboarding have given attackers more opportunities to move funds quickly and to open accounts at scale.

As governments respond with stronger fraud strategies and regulatory frameworks, they also stress the importance of technology and data-driven monitoring as part of their approach. For example, the UK Government’s Fraud Strategy for 2026–2029 emphasises disruption, safeguarding and coordinated use of new technologies across sectors.

Key AI-Driven Threats in Payments

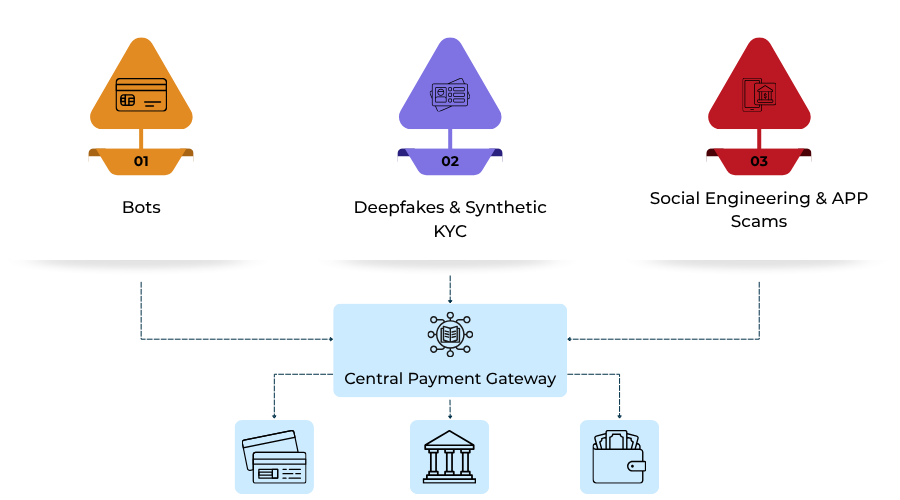

In 2026, several AI-enabled fraud patterns have become especially relevant for payment and risk teams:

- Bot-driven card testing and enumeration – automated tools probe large volumes of payment credentials, attempting small transactions and adjusting behaviour in response to basic defences such as rate limits.

- Deepfake-assisted impersonation – AI-generated voice and video can support scams that imitate trusted individuals or organisations, including during escalation or manual review processes.

- Synthetic identity fraud – attackers create new, composite identities that gradually build credit and trust before being used for large-scale fraud or chargebacks.

These patterns are not limited to one region or rail. They appear across card payments, account-to-account transfers and digital wallets, and they affect both consumer flows and merchant or PSP onboarding processes.

Bot Attacks, Deepfakes and Synthetic Identities – What They Look Like in Practice

Bot Card Testing and Enumeration

Card testing and BIN-range enumeration are long-standing threats, but the methods used in 2026 look different from older, simpler scripts. Modern bots can imitate aspects of human browsing behaviour, vary devices and IP addresses, and adapt timing patterns to avoid obvious detection.

From a merchant perspective, a card testing wave can lead to:

- Increased operational noise in logs and monitoring tools.

- Potential reputational and scheme risk if the activity is not controlled.

Because these attacks can be distributed across multiple routes and merchants, an individual merchant often sees only a portion of a wider campaign. This is one reason why cross-institution intelligence sharing and network-level monitoring are gaining importance.
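As a rough illustration of a baseline control for this pattern, the sketch below counts how many distinct cards a single source (for example a device or IP address) attempts within a sliding time window. The class name, parameters and thresholds are hypothetical and chosen only for the example. Because modern bots rotate devices and IP addresses, a per-source counter like this is a starting point rather than a complete defence.

```python
from collections import defaultdict, deque


class CardTestingDetector:
    """Flags a source that tries many distinct cards in a short window (illustrative only)."""

    def __init__(self, window_seconds=60.0, max_distinct_cards=5):
        self.window_seconds = window_seconds
        self.max_distinct_cards = max_distinct_cards
        # source_id -> deque of (timestamp, card_fingerprint), oldest first
        self._attempts = defaultdict(deque)

    def observe(self, source_id, card_fingerprint, timestamp):
        """Record one payment attempt; return True if the source now looks like card testing."""
        attempts = self._attempts[source_id]
        attempts.append((timestamp, card_fingerprint))
        # Drop attempts that have fallen out of the sliding window.
        while attempts and timestamp - attempts[0][0] > self.window_seconds:
            attempts.popleft()
        distinct_cards = {card for _, card in attempts}
        return len(distinct_cards) > self.max_distinct_cards


# Five different cards from one source, one second apart.
detector = CardTestingDetector(window_seconds=60, max_distinct_cards=3)
flags = [detector.observe("device-A", f"card-{i}", float(i)) for i in range(5)]
print(flags)  # [False, False, False, True, True]
```

The same counting idea can be keyed on other attributes, such as a BIN range or a shipping address, which is one way to catch attempts that are spread across many sources.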

Deepfake-Aided KYC and Onboarding Abuse

Deepfake technology and generative AI tools can create realistic identities that challenge traditional document-plus-selfie checks. Examples include:

- AI-generated faces used in video or selfie verification flows.

- Manipulated or fabricated ID documents that pass basic image quality checks.

- Synthetic voices used in support or review calls to impersonate customers or company representatives.

These techniques can be applied both to consumer onboarding and to merchant or PSP onboarding, which is a particular concern for high-risk industries such as gambling, foreign exchange or certain digital services.

Synthetic Identities and Long-Game Fraud

Synthetic identity fraud involves building entirely new identities that blend into normal customer portfolios. Attackers may use a mixture of genuine and fictitious data, gradually building a history of small, legitimate transactions before initiating larger fraud events or defaulting.

Investigations into synthetic identity trends emphasise that:

- Many synthetic profiles appear multiple times across different organisations.

- Repeated identity abuse can be distributed over several years.

- Traditional checks focused on “first use” of an identity are often insufficient on their own.

A more effective response requires ongoing verification and analysis of how an identity behaves over time, not just how it looks at the application stage.
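One simple way to look beyond the first use of an identity is to check whether the same contact or document details keep reappearing under different names. The sketch below is a minimal example under assumed field names (`name`, `phone`, `address`, `national_id`); it is not a description of any particular provider's method, and real systems would normalise values and handle legitimate sharing such as family members at one address.

```python
from collections import defaultdict


def find_reused_attributes(applications, fields=("phone", "address", "national_id"),
                           min_identities=2):
    """Return {(field, value): sorted names} for values shared by several distinct names."""
    names_by_value = defaultdict(set)
    for app in applications:
        for field in fields:
            value = app.get(field)
            if value:  # skip missing or empty attributes
                names_by_value[(field, value)].add(app["name"])
    return {
        key: sorted(names)
        for key, names in names_by_value.items()
        if len(names) >= min_identities
    }


applications = [
    {"name": "Alex Reed", "phone": "555-0100", "national_id": "ID-1"},
    {"name": "Jamie Cole", "phone": "555-0100", "national_id": "ID-2"},
    {"name": "Sam Price", "phone": "555-0199", "national_id": "ID-2"},
]
for (field, value), names in find_reused_attributes(applications).items():
    print(field, value, names)
```

Here the shared phone number links the first two applications and the shared ID number links the last two, so all three profiles become candidates for closer review.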

How Modern AI Fraud Engines Actually Work

Behavioural, Device and Network Signals

Modern AI fraud engines tend to use a wide range of signals from across the customer lifecycle. Instead of relying solely on static data fields like card number, name and amount, they consider:

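- Behavioural data describing how the customer acts over time.

- Device and network characteristics, including device identifiers, operating system, browser and IP address reputation.

- Network relationships between accounts and payment instruments.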

By combining these features, models can identify anomalies that suggest automation or synthetic behaviour, even when each transaction looks legitimate in isolation.
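To make the idea of combining signals concrete, the sketch below gathers three weak indicators from one session: unusually even timing between actions, an unrecognised device, and a poor IP reputation score. The field names, thresholds and the 0 to 1 `ip_risk` scale are assumptions made for the example. Production engines generally rely on trained models rather than hand-set cut-offs.

```python
from statistics import mean, pstdev


def timing_regularity(event_times):
    """Coefficient of variation of the gaps between events (sorted timestamps, in seconds).

    Values near 0 mean almost perfectly even spacing, which is unusual for a person.
    Returns None when there are too few events to judge.
    """
    gaps = [later - earlier for earlier, later in zip(event_times, event_times[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


def automation_signals(session, max_regularity=0.1, max_ip_risk=0.7):
    """Collect weak signals; thresholds and field names are illustrative assumptions."""
    signals = []
    regularity = timing_regularity(session["event_times"])
    if regularity is not None and regularity < max_regularity:
        signals.append("uniform_timing")
    if not session["device_seen_before"]:
        signals.append("unknown_device")
    if session["ip_risk"] > max_ip_risk:
        signals.append("risky_ip")
    return signals


bot_like = {"event_times": [0.0, 1.0, 2.0, 3.0, 4.0], "device_seen_before": False, "ip_risk": 0.9}
human_like = {"event_times": [0.0, 2.5, 3.1, 9.0, 10.2], "device_seen_before": True, "ip_risk": 0.1}
print(automation_signals(bot_like))    # ['uniform_timing', 'unknown_device', 'risky_ip']
print(automation_signals(human_like))  # []
```

No single signal here is conclusive; a new device or a shared IP address is common among genuine customers. The value comes from several indicators pointing the same way in one session.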

Real-Time Scoring Across the Customer Journey

A second key feature of 2026 fraud engines is real-time, iterative scoring. Instead of performing a single risk check at the moment of payment, many systems now assess risk:

- At login and device recognition stages.

- During browsing, account changes and funding actions.

- At the point of payment.

Risk scores can change as more information becomes available. For example, a returning customer on a trusted device may receive a low-risk score, while a new device, unusual location and altered behaviour in one session might push risk higher, even before a payment is attempted.

These scores are then used by orchestration layers to decide whether to approve, decline, request step-up authentication or route to a different rail or provider.
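The returning-customer and new-device examples above can be sketched as a running score that is updated at each stage and then mapped to an action. The point values, the 0 to 100 scale and the thresholds are hypothetical; a real engine would typically derive them from model outputs, and an orchestration layer could add further actions such as rerouting.

```python
from dataclasses import dataclass, field

# Hypothetical point values per signal; real engines typically learn these from data.
SIGNAL_POINTS = {
    "trusted_device": -20,
    "new_device": 30,
    "unusual_location": 25,
    "altered_behaviour": 25,
}


@dataclass
class SessionRisk:
    score: int = 30  # assumed neutral starting score on a 0-100 scale
    history: list = field(default_factory=list)

    def update(self, stage: str, signal: str) -> int:
        """Apply one signal seen at a journey stage, keeping the score within 0-100."""
        self.score = max(0, min(100, self.score + SIGNAL_POINTS[signal]))
        self.history.append((stage, signal, self.score))
        return self.score


def decide(score: int, step_up_at: int = 50, decline_at: int = 90) -> str:
    """Map the current score to an action; thresholds are illustrative."""
    if score >= decline_at:
        return "decline"
    if score >= step_up_at:
        return "step_up"
    return "approve"


returning = SessionRisk()
returning.update("login", "trusted_device")
print(decide(returning.score))  # approve

risky = SessionRisk()
risky.update("login", "new_device")
risky.update("login", "unusual_location")
print(decide(risky.score))  # step_up, before any payment is attempted
risky.update("browsing", "altered_behaviour")
print(decide(risky.score))  # decline
```

Keeping the `history` of updates alongside the score also gives reviewers a simple trail of which signals moved the decision, which helps when an outcome is challenged.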

Governance, Transparency and Oversight

As AI systems become more involved in financial decisions, regulators and policymakers place growing emphasis on governance and transparency. Recent commentary on AI and fraud prevention has highlighted expectations around:

- Documented model objectives, limitations and risk controls.

- Human oversight and the ability to challenge or override automated outcomes.

- Monitoring for bias and unintended consequences in decision-making.

Government strategy papers on fraud and economic crime also reference the need for coordinated use of advanced technologies in a way that remains accountable and proportionate.

Defensive Layers Against AI-Powered Fraud

Strengthening Identity and Onboarding Controls

Given the rise of deepfakes and synthetic identities, many organisations are revisiting how they verify customers and merchants at onboarding. Recommended practices in public and industry guidance include:

- Verifying identity data against multiple independent sources.

- Using liveness detection to ensure that the person present is a real, live individual.

- Applying document forensics to detect manipulation or AI generation.

- Monitoring behaviour after onboarding to identify inconsistencies over time.

These measures support a layered approach, where no single method is considered sufficient on its own.

Adaptive Controls Rather Than One-Size-Fits-All Rules

Static rules that treat every transaction the same can create unnecessary friction for good customers or overlook subtle risk patterns. An alternative is to use adaptive controls, where the level of friction is aligned with the level of risk.

Examples of adaptive measures include:

- Applying stronger authentication only for transactions above certain risk thresholds.

- Adjusting limits and checks based on historical behaviour, device trust and geography.

- Using dynamic routing and challenge flows informed by issuer and scheme behaviour trends.

These approaches support a better balance between fraud prevention and customer experience.
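As one possible illustration, the sketch below scales an amount limit to each customer's own history and device trust instead of applying a single fixed limit to everyone. The `CustomerContext` fields, the multipliers and the three outcomes are assumptions made for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class CustomerContext:
    typical_amount: float  # e.g. median of the customer's past successful payments
    device_trusted: bool
    home_country: str


def choose_friction(amount, country, ctx, base_multiple=3.0):
    """Return 'none', 'step_up' or 'review' for one payment, scaled to this customer."""
    # Trusted devices get more headroom; unfamiliar ones get less.
    multiple = base_multiple * (1.5 if ctx.device_trusted else 0.5)
    limit = ctx.typical_amount * multiple
    unusual_amount = amount > limit
    unusual_geo = country != ctx.home_country
    if unusual_amount and unusual_geo:
        return "review"
    if unusual_amount or unusual_geo:
        return "step_up"
    return "none"


trusted = CustomerContext(typical_amount=40.0, device_trusted=True, home_country="GB")
untrusted = CustomerContext(typical_amount=40.0, device_trusted=False, home_country="GB")
print(choose_friction(120.0, "GB", trusted))    # none
print(choose_friction(120.0, "GB", untrusted))  # step_up
print(choose_friction(500.0, "BR", trusted))    # review
```

The same payment of 120 passes without friction on a trusted device but triggers a step-up on an unfamiliar one, which is the behaviour a single static limit cannot express.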

Collaboration and Intelligence Sharing

Many public and private sources underline the value of sharing intelligence about emerging fraud patterns, especially when attacks are distributed across multiple institutions.

This can involve:

- Participation in information sharing arrangements or industry initiatives where legally appropriate.

- Coordinated responses to threats that target particular payment rails, regions or customer segments.

Regulatory discussions on instant payments and authorised push payment fraud also stress that strong real-time monitoring and verification are essential as payment speed increases. (Source: official EU Instant Payments Regulation pages and legal analyses.)

Practical Considerations for Merchants and Payment Teams in 2026

Questions to Explore with Providers

Merchants and platforms do not need to design all fraud models themselves, but they do need a clear understanding of how their providers approach risk. In 2026, it can be useful to discuss topics such as:

- Which types of AI-driven threats the provider’s systems are designed to address.

- How frequently models are updated and what triggers a review.

- What data is used beyond basic transaction fields.

- How decisions can be explained if there is a dispute or investigation.

These conversations help align expectations and ensure that both parties understand their respective responsibilities.

Data and Metrics That Support Better Outcomes

Fraud engines rely on meaningful data, and decision-making improves when merchants and providers share relevant context. Areas that often benefit from closer attention include:

- Performance metrics – approval rates, decline codes, and dispute ratios broken down by issuer, BIN, geography and channel.

- Customer friction indicators – abandonment at authentication steps, repeated failures, and support contacts.

- Lifecycle information – how customers behave over time, not just during a single transaction.

Monitoring these metrics helps identify when risk controls need adjustment, whether AI models are behaving as expected, and where there may be opportunities to reduce friction without increasing fraud.
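A breakdown of this kind can be produced with very little code. The sketch below groups transactions by a chosen key, such as BIN, and computes an approval rate and a dispute ratio for each group. The record fields are hypothetical, and the dispute ratio is defined here as disputes divided by approved transactions, which is an assumption; card schemes and providers use their own definitions.

```python
from collections import defaultdict


def metrics_by(transactions, key):
    """Group transactions by `key` and compute approval rate and dispute ratio per group."""
    totals = defaultdict(lambda: {"attempts": 0, "approved": 0, "disputed": 0})
    for tx in transactions:
        group = totals[tx[key]]
        group["attempts"] += 1
        if tx["approved"]:
            group["approved"] += 1
            if tx.get("disputed", False):
                group["disputed"] += 1
    report = {}
    for name, g in totals.items():
        report[name] = {
            "approval_rate": g["approved"] / g["attempts"],
            # Assumed definition: disputes as a share of approved transactions.
            "dispute_ratio": g["disputed"] / g["approved"] if g["approved"] else 0.0,
        }
    return report


transactions = [
    {"bin": "411111", "approved": True, "disputed": False},
    {"bin": "411111", "approved": True, "disputed": True},
    {"bin": "411111", "approved": True, "disputed": False},
    {"bin": "411111", "approved": False},
    {"bin": "550000", "approved": True, "disputed": False},
    {"bin": "550000", "approved": False},
]
report = metrics_by(transactions, "bin")
print(report["411111"]["approval_rate"])  # 0.75
print(report["550000"]["approval_rate"])  # 0.5
```

Passing a different key, such as an issuer or country field, gives the other breakdowns mentioned above without changing the function.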

Building a Long-Term Risk Strategy

Fraud prevention is not a one-off project. As strategies from governments and regulators show, there is an expectation that organisations will continually improve their controls and adapt to new threats.

A long-term approach typically includes:

- Periodic review of fraud patterns and emerging risks.

- Ongoing training and awareness for internal teams.

- Engagement with policy and industry developments, particularly around instant payments, open banking and AI governance.

For high-risk merchants, this may also involve revisiting how payment partners are selected, how risk responsibilities are allocated, and how quickly responses can be implemented when new threats appear.

Looking Ahead – AI, Regulation and Payment Risk

Public strategies and horizon reports on financial services suggest that the use of AI in fraud prevention will continue to grow, alongside closer regulatory attention. As instant payments and new rails become more widespread, expectations around real-time monitoring and consumer protection are likely to increase.

At the same time, initiatives like the UK Government’s Fraud Strategy 2026–2029 emphasise collaboration between government, law enforcement, industry and civil society. This reflects an understanding that fraud cannot be addressed by any single organisation in isolation.

For merchants and payment providers, the implication is clear: AI is becoming part of the basic toolkit for managing payment risk, but it needs to be combined with good data, responsible governance and active engagement with the wider fraud ecosystem.

Conclusion

In 2026, payment fraud is no longer just a matter of spotting obviously suspicious transactions. It involves understanding how AI is being used by attackers, recognising the signs of synthetic and automated activity, and building layered defences that can respond in real time.

Modern fraud engines are designed to meet this challenge by analysing behaviour, devices and networks, and by applying adaptive controls that respond to changing risk. However, they work best when merchants and payment teams provide good data, ask informed questions, and view fraud prevention as a continuous, collaborative effort.

By treating fraud as an AI-era challenge rather than a static rules problem, organisations can be better prepared for the next wave of threats and can help protect both their customers and their payment relationships as the landscape continues to evolve.

FAQ

1. What does “AI vs AI in payments” actually mean?

AI vs AI in payments describes a situation where fraudsters use artificial intelligence to scale and disguise attacks, while payment providers and merchants use their own AI systems to detect and prevent those attacks in real time.

2. How is payment fraud different in 2026 compared with previous years?

Payment fraud in 2026 is more automated, identity-focused and distributed than in previous years, with attackers using tools like bots, deepfakes and synthetic identities to bypass traditional controls and exploit real-time payment rails.

3. What is synthetic identity fraud in the context of payments?

Synthetic identity fraud involves creating new identities using a mixture of genuine and invented information, building up a history of apparently normal behaviour, and then using those identities to commit fraud or default on obligations.

4. Why are deepfakes a problem for KYC and onboarding?

Deepfakes can generate convincing images, video and audio that make it harder for simple document-plus-selfie checks to distinguish real applicants from fabricated ones, challenging traditional KYC and onboarding processes.

6. What kind of data do modern AI fraud engines use?

Modern AI fraud engines typically use behavioural data, device and network information, and network relationships between accounts and instruments, in addition to core transaction fields such as amount, currency and merchant details.

7. How does real-time risk scoring improve fraud prevention?

Real-time risk scoring allows systems to assess and update risk levels across the customer journey (at login, during browsing and at payment), so that decisions on approvals, step-up checks or blocks can be made based on the latest available information.

8. Why is model governance important for AI-based fraud tools?

Model governance is important because regulators expect AI systems used in financial services to be accountable, monitored and explainable, with clear documentation and appropriate human oversight of automated decisions.

9. What practical steps can merchants take to support AI fraud prevention?

Merchants can support AI fraud prevention by sharing relevant behavioural and device data with providers, monitoring key metrics such as approval and dispute rates, and regularly reviewing how their risk controls impact both fraud and customer experience.

10. How do adaptive controls help balance fraud prevention and conversion?

Adaptive controls adjust the level of friction, such as additional authentication, according to the assessed risk of each transaction, helping to protect against fraud while reducing unnecessary inconvenience for low-risk customers.